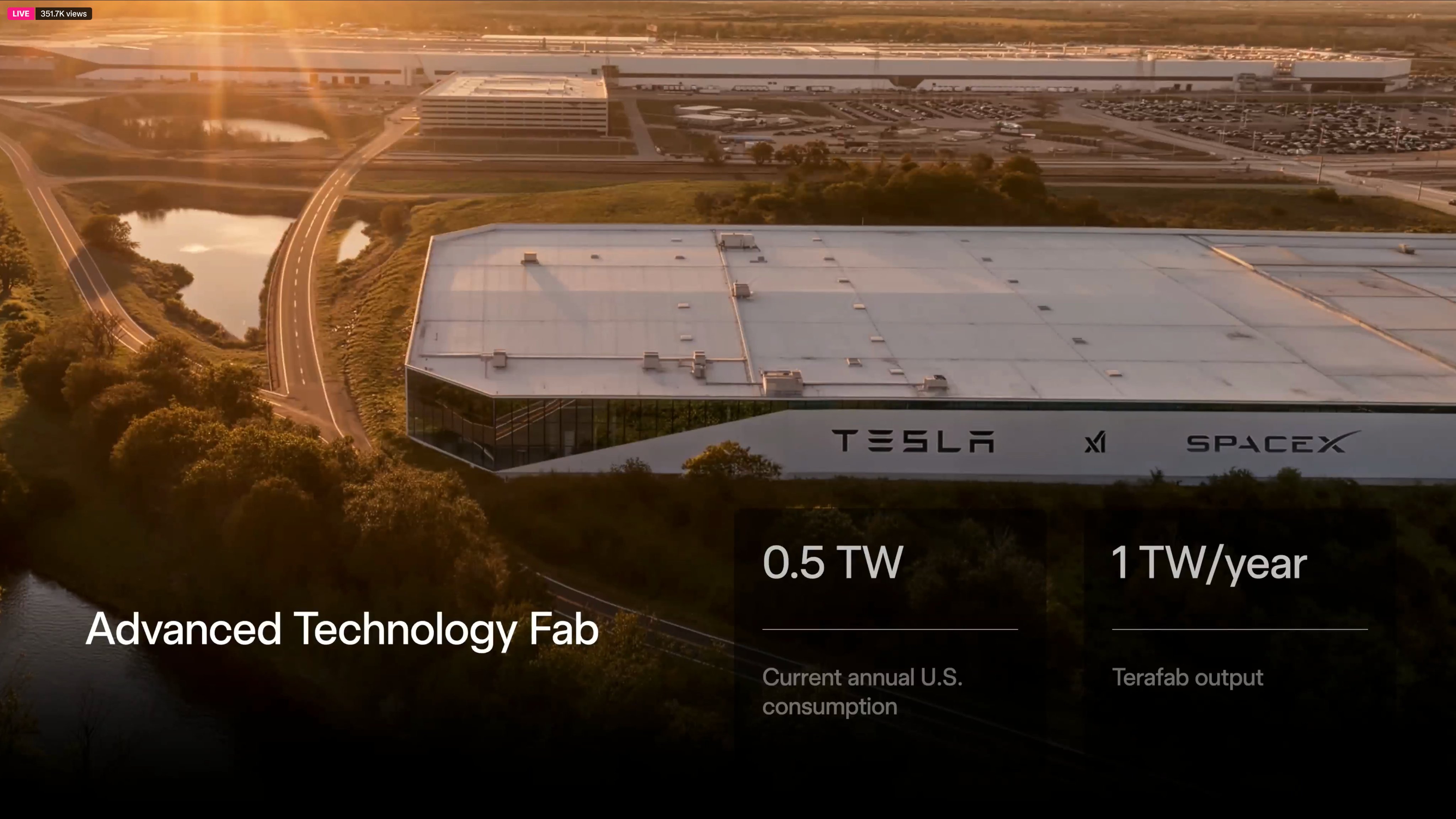

In an announcement that pairs Tesla’s AI ambitions with SpaceX’s space infrastructure expertise, the TERAFAB project has been formally unveiled. The joint venture aims to produce more than one terawatt of compute per year, spanning logic, memory, and advanced packaging, with approximately 80% allocated to space-based applications and 20% to terrestrial use. The initiative is pitched as vertical integration taken to its limit: AI software co-designed with custom AI hardware and the semiconductor fabrication processes behind it. This review breaks down the technical details, strategic implications, and engineering claims based on the official announcements and presentations.

The Vision Behind TERAFAB: Exploring the Universe Through AI Compute

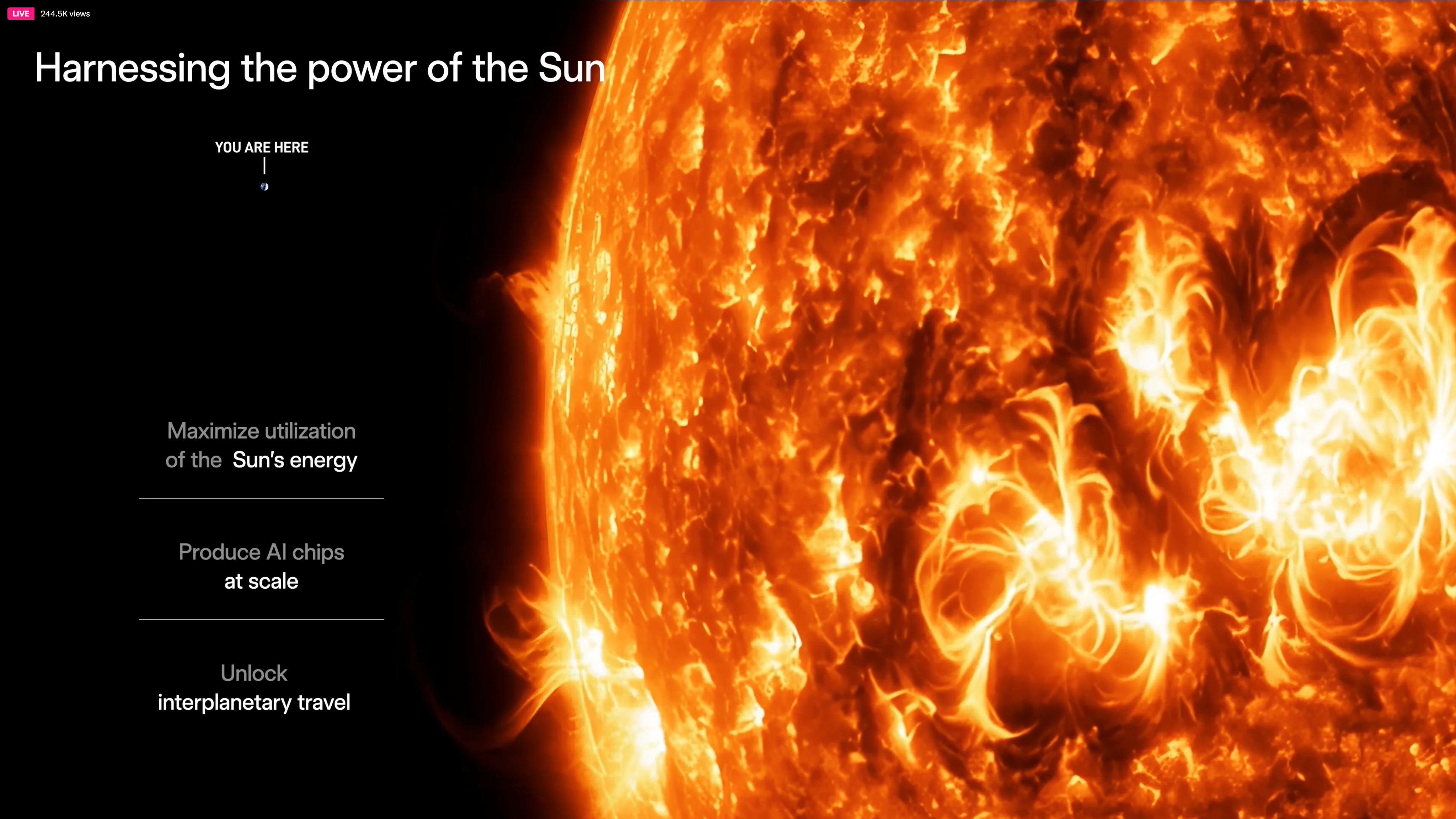

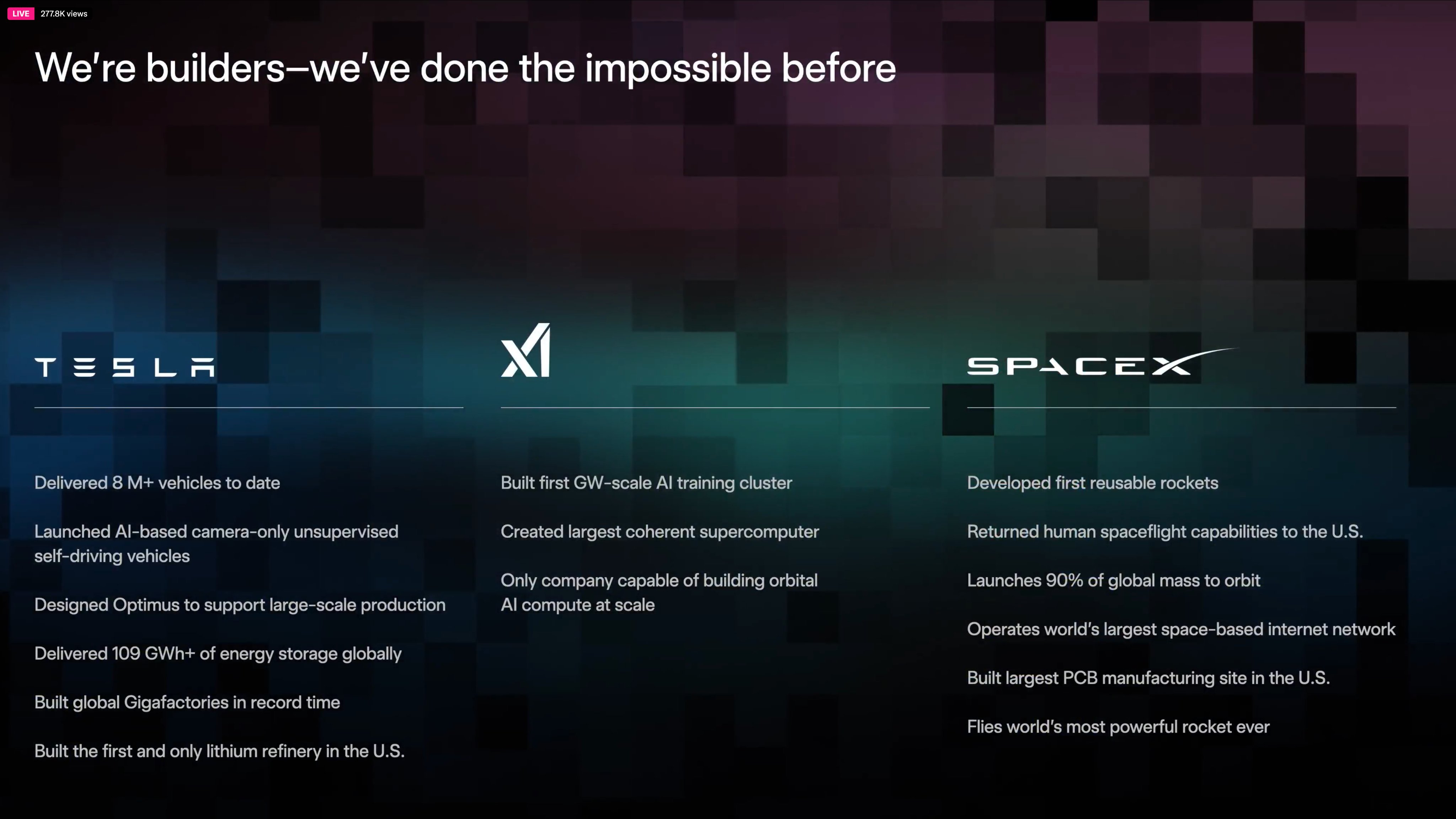

Tesla’s official statement captures the philosophical core: “In order to understand the universe, you must explore the universe.” TERAFAB is presented as more than a chip factory: it is meant to be the foundational infrastructure for the massive AI compute clusters required to power next-generation autonomy, humanoid robotics, and ultimately space-based intelligence. By developing this capability jointly with SpaceX, Tesla is positioning itself to work around the physical and economic limits of Earth-bound data centers.

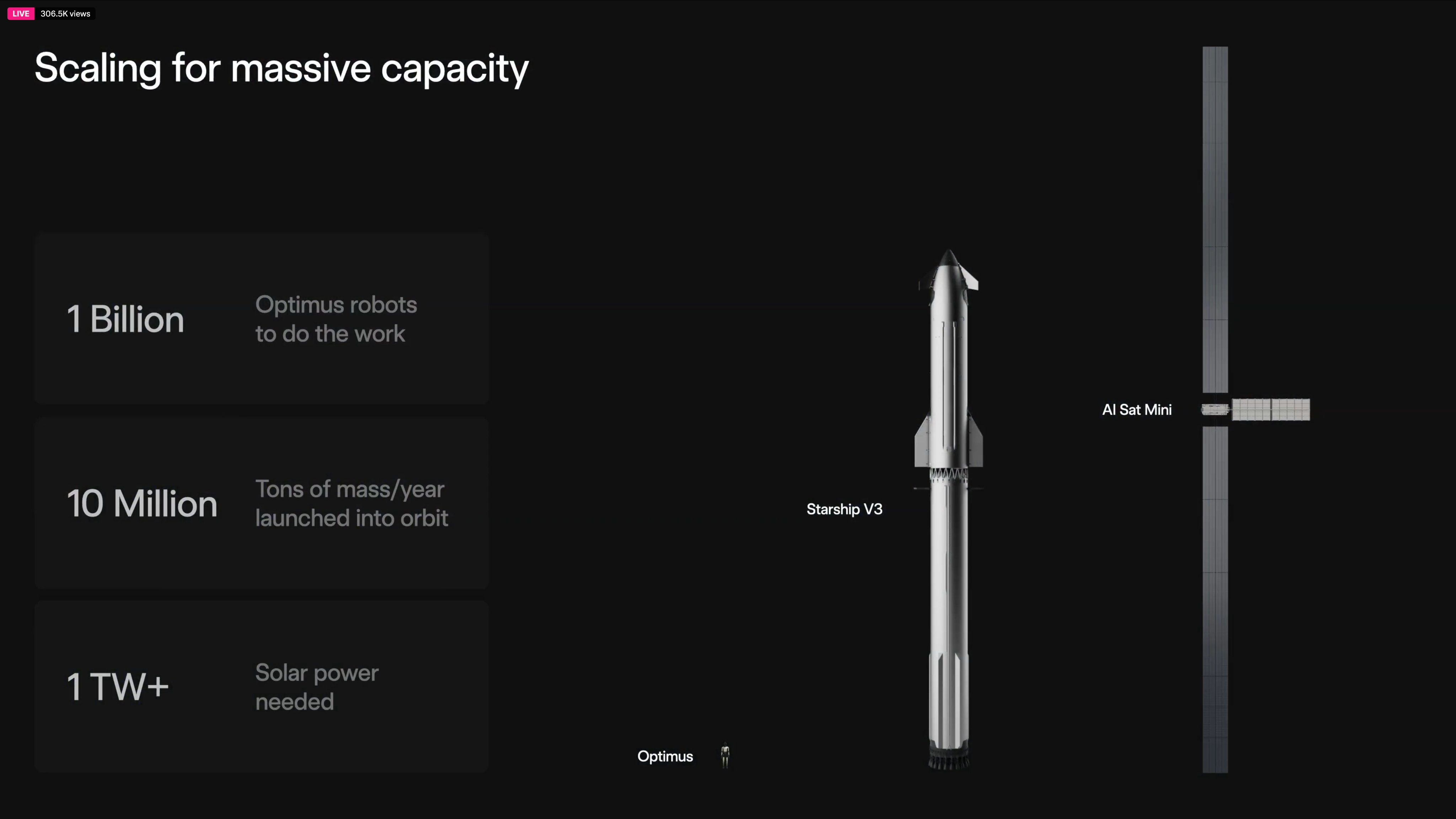

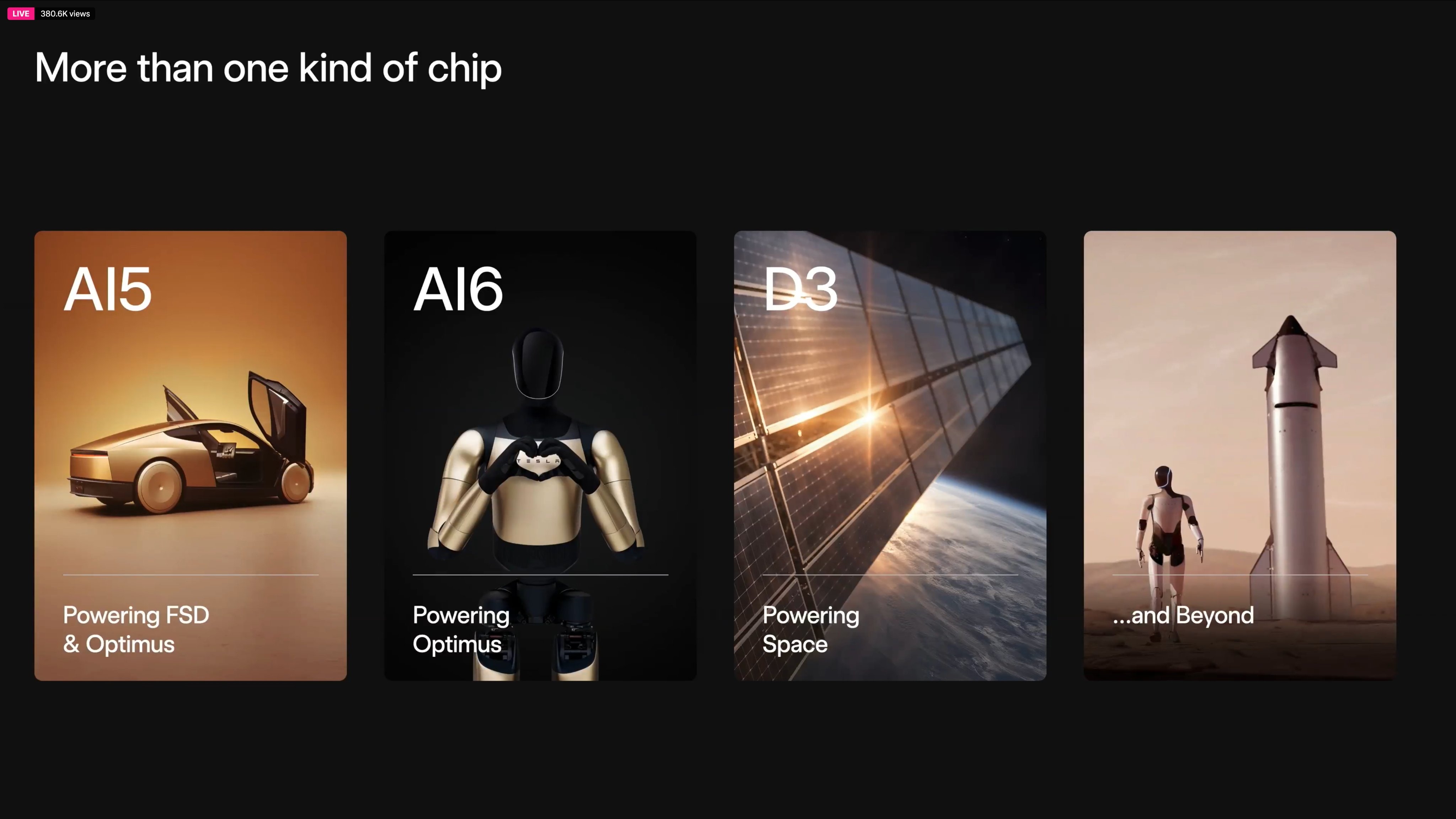

The project addresses the rapidly growing demand for inference compute in Tesla’s Full Self-Driving (FSD) systems and Optimus humanoid robots, as well as for xAI’s large-scale training clusters. Musk’s projections suggest that more than a billion Optimus units could eventually require inference chips at a scale that dwarfs current automotive needs.

Elon Musk’s Full TERAFAB Presentation: Key Technical Highlights

Elon Musk delivered a comprehensive overview of the TERAFAB roadmap during a livestreamed event. The presentation detailed the engineering rationale, power constraints, and manufacturing strategy that will enable terawatt-scale production. Here is a technical breakdown of the core elements presented:

- Inference Chip Architecture: A new high-efficiency inference chip optimized for both Tesla vehicles (FSD) and Optimus robots. Musk projected that Optimus will require at least 10× the chip volume of the entire Tesla vehicle fleet due to the anticipated deployment of over one billion humanoid robots.

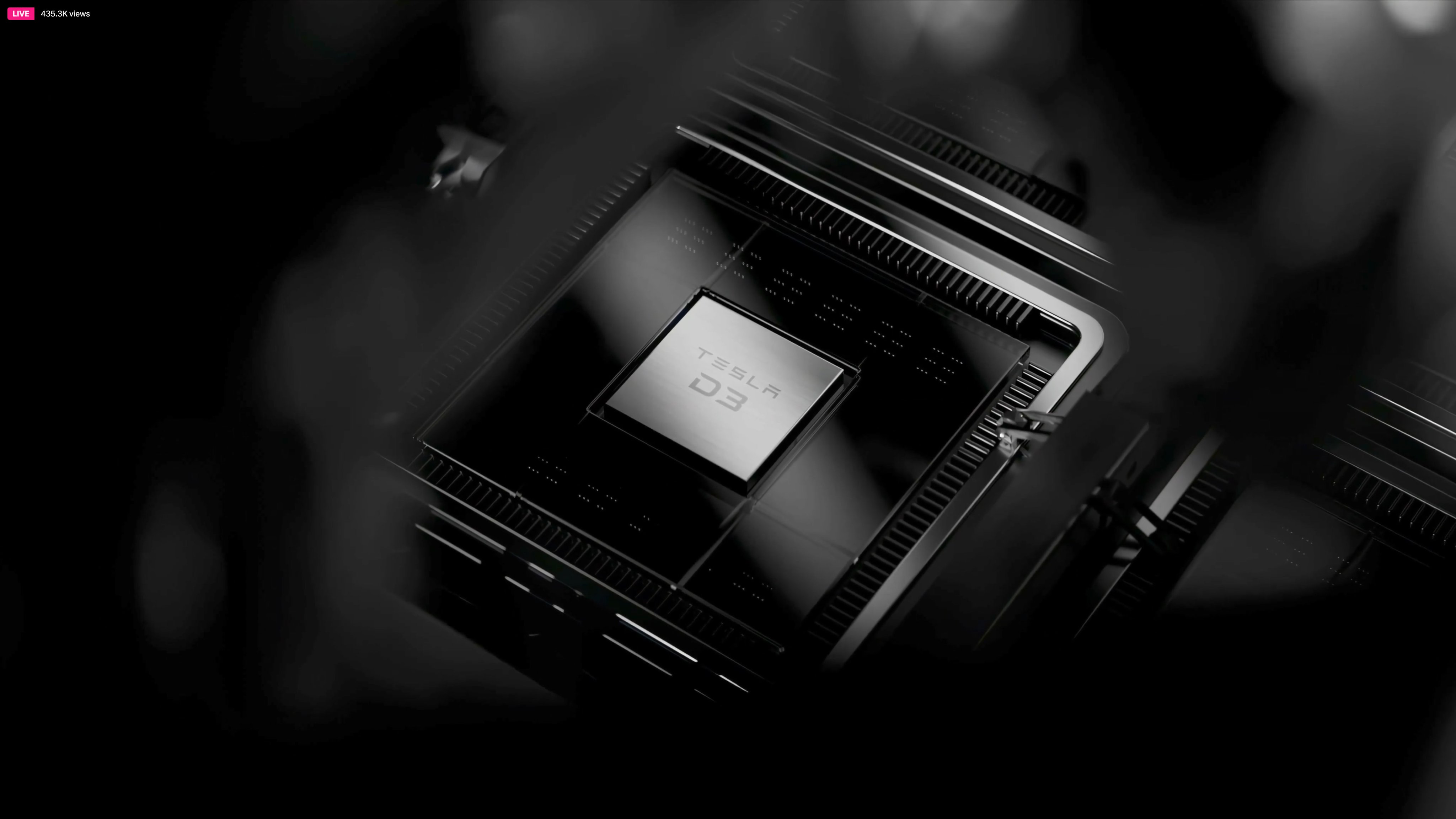

- Space-Optimized Inference Chip: Designed to operate at significantly higher temperatures than terrestrial counterparts. In the vacuum of space, heat can only be shed by radiation, and radiated power per unit area rises with the fourth power of the radiator’s absolute temperature, so a hotter-running chip needs less radiator area and mass for the same heat load.

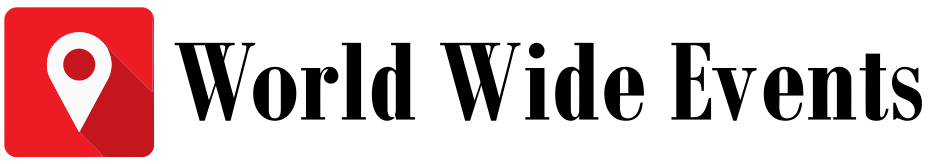

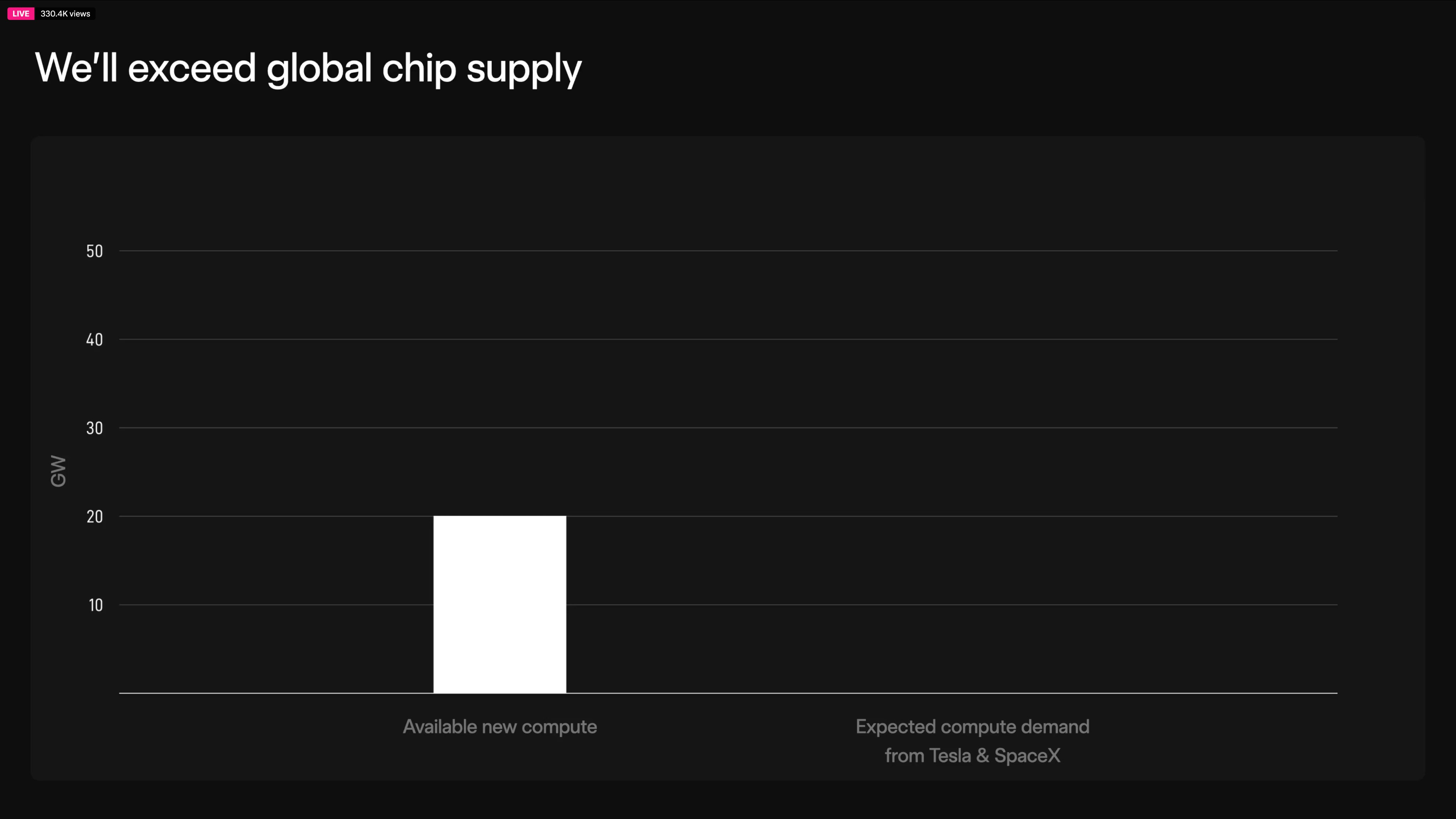

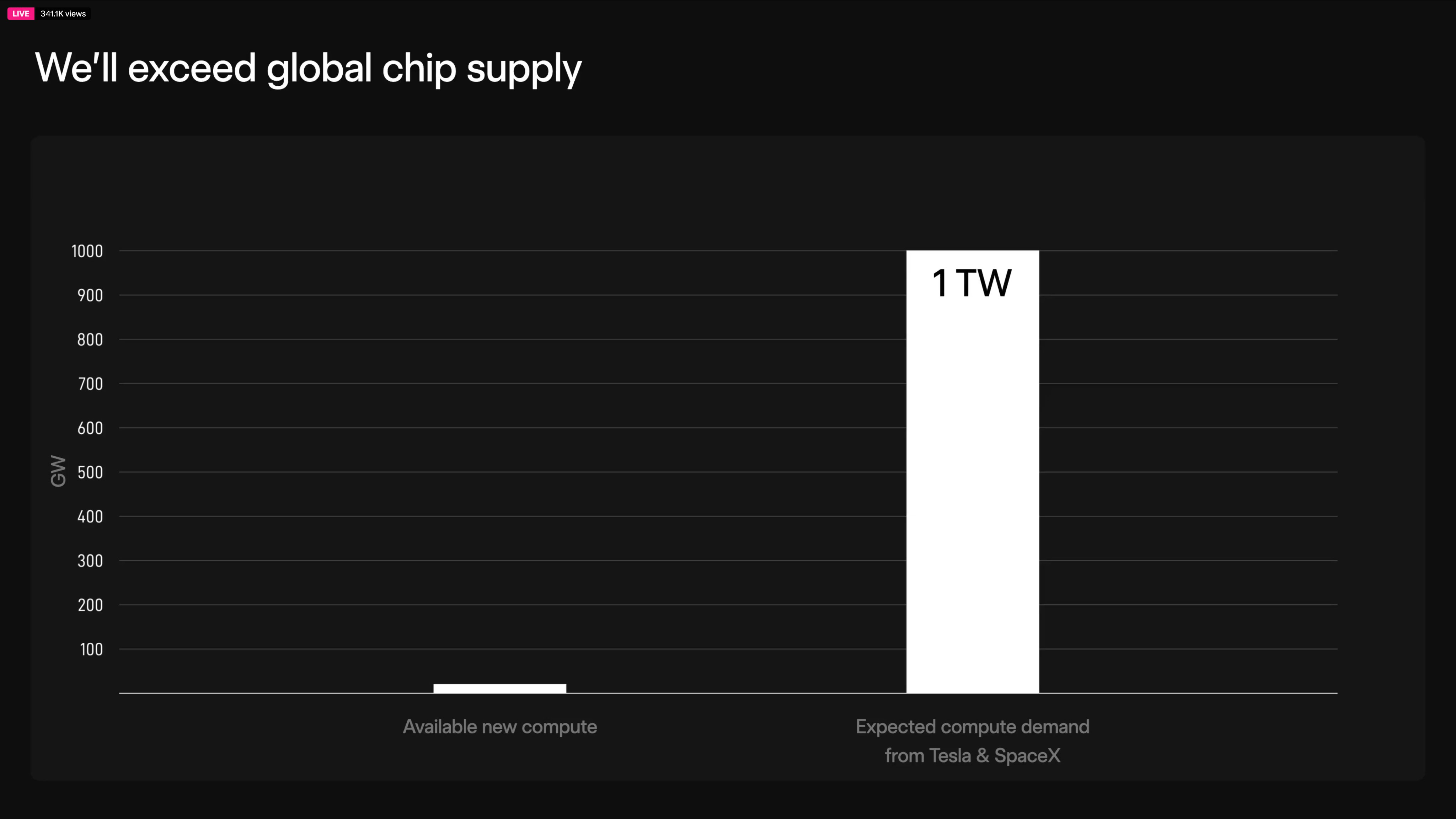

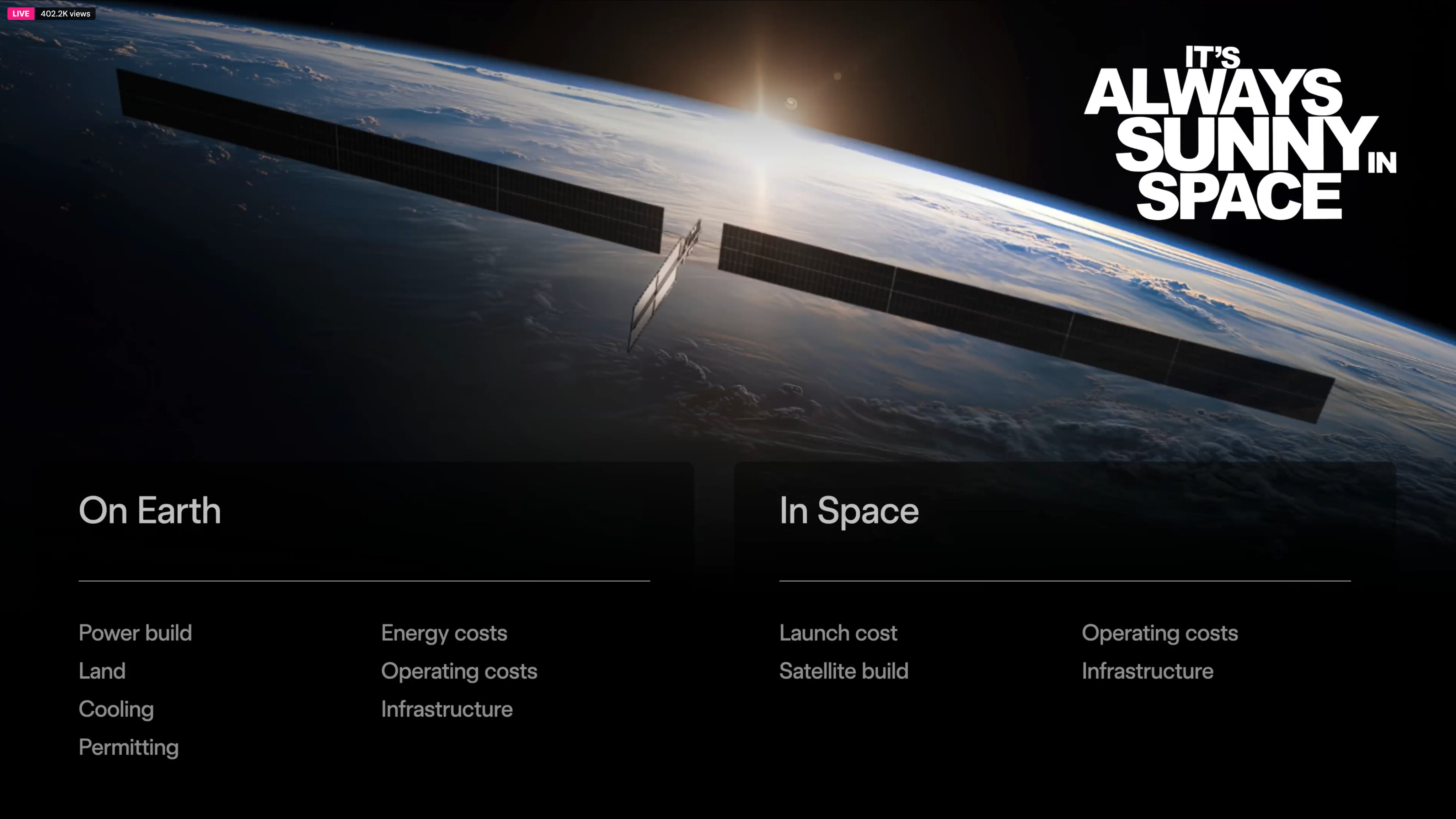

- Power Availability Advantage in Space: Solar energy yield in space is cited as approximately 5× higher than on Earth, owing to the absence of atmospheric attenuation and weather. Musk forecasts that terrestrial AI compute will top out at 100–200 GW because of siting, NIMBY opposition, and grid constraints, while space-based AI compute could scale to 1 TW and beyond.

- Full-Stack Vertical Integration: From AI software algorithms through silicon design to in-house fabrication, every layer is co-optimized for maximum performance and efficiency.

- R&D-First Fab Strategy: TERAFAB begins as a captive semiconductor plant allowing rapid iteration on new device physics and process technologies — a direct parallel to Tesla’s original Gigafactory approach for batteries.
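The radiator argument in the space-optimized chip bullet follows from the Stefan-Boltzmann law. The sketch below is a minimal illustration, assuming an idealized one-sided radiator facing deep space. The 300 K and 400 K operating temperatures, the 0.9 emissivity, and the 1 MW heat load are illustrative assumptions, not figures from the presentation.

```python
# Stefan-Boltzmann constant in W / (m^2 * K^4).
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sink_temp_k=0.0):
    """Area an idealized one-sided radiator needs to reject heat_w watts.

    emissivity and sink_temp_k are assumed values chosen for illustration.
    """
    if temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    # Net radiated flux per square metre of radiator surface.
    net_flux_w_m2 = emissivity * SIGMA * (temp_k**4 - sink_temp_k**4)
    return heat_w / net_flux_w_m2


# Hypothetical operating points: a cooler and a hotter radiator
# rejecting the same assumed 1 MW heat load.
HEAT_LOAD_W = 1.0e6
area_cool = radiator_area_m2(HEAT_LOAD_W, 300.0)
area_hot = radiator_area_m2(HEAT_LOAD_W, 400.0)
area_ratio = area_cool / area_hot

print(f"Area at 300 K: {area_cool:.0f} m^2")
print(f"Area at 400 K: {area_hot:.0f} m^2")
print(f"Cool-to-hot area ratio: {area_ratio:.2f}")
```

With a sink temperature of zero, the ratio depends only on (400/300)^4, about 3.16, so under these assumptions the cooler radiator needs roughly three times the area of the hotter one.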

This presentation underscores that TERAFAB is not just capacity expansion — it is a fundamental shift in how AI hardware is developed and deployed at planetary and interplanetary scales.
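The roughly 5× solar yield figure can be sanity-checked with simple arithmetic. The sketch below compares time-averaged irradiance per square metre of panel. The solar constant of about 1361 W/m² is a standard value; the 1000 W/m² ground peak, the 25% ground availability, and the 99% orbital sunlight fraction are assumptions chosen for illustration and vary widely with site and orbit.

```python
SOLAR_CONSTANT_W_M2 = 1361.0  # irradiance above the atmosphere at 1 AU
GROUND_PEAK_W_M2 = 1000.0     # assumed clear-sky peak at the surface


def average_yield_w_m2(peak_irradiance_w_m2, availability):
    """Time-averaged irradiance given the fraction of time at peak."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return peak_irradiance_w_m2 * availability


# Assumed availabilities: near-continuous sunlight in a suitable orbit,
# versus night, weather, and sun angle on the ground.
space_yield = average_yield_w_m2(SOLAR_CONSTANT_W_M2, 0.99)
ground_yield = average_yield_w_m2(GROUND_PEAK_W_M2, 0.25)
ratio = space_yield / ground_yield

print(f"Space:  {space_yield:.0f} W/m^2 average")
print(f"Ground: {ground_yield:.0f} W/m^2 average")
print(f"Ratio:  {ratio:.1f}x")
```

Under these assumptions the ratio lands slightly above 5, consistent with the figure quoted in the presentation. A sunnier ground site or an orbit with longer eclipses would pull it lower.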

Ashok Elluswamy on Extreme Co-Design: AI Software × Hardware × Fabrication

Tesla AI Director Ashok Elluswamy summarized the approach in one line: “Extreme AI software – AI hardware – Semiconductor fabrication co-design!” This three-way integration is meant to create a closed-loop optimization cycle that a foundry serving many external customers is not structured to replicate. Every neural network layer, every transistor layout, and every wafer process step can be tuned together for the target workloads of autonomy and robotics.

The co-design approach enables:

- Custom silicon optimized for Tesla’s end-to-end neural networks rather than general-purpose GPUs.

- Reduced latency and power consumption critical for real-time inference in vehicles and robots.

- Accelerated iteration cycles: design changes could move from simulation to fabricated silicon in weeks instead of months.

- Future-proofing for “new physics” in device architectures that may emerge from internal R&D.

Technical Specifications and Engineering Innovations

While full die-level specifications remain under wraps, the announced parameters reveal a highly ambitious technical roadmap:

- Annual Compute Output Target: more than 1 terawatt (1,000 GW) of AI inference and training capacity per year once fully ramped, with compute expressed as the electrical power the chips draw.

- Allocation Split: 80% space-qualified chips for orbital data centers, 20% ground-based for Tesla factories, vehicles, and Optimus production.

- Chip Variants: Dual-track development — standard high-volume inference silicon for Earth and high-temperature, radiation-tolerant variants for space.

- Advanced Packaging: Integration of logic, memory, and interconnects under one roof to minimize data movement energy costs.

- Process Technology Focus: Rapid experimentation with next-node processes and novel device structures, bypassing traditional multi-year foundry cycles.

- Manufacturing Scale: Captive fab designed from the outset for terawatt-scale output, leveraging lessons from Gigafactory battery production.
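The output target and allocation split above translate into concrete power budgets. The sketch below works through the arithmetic using the announced 1,000 GW and 80/20 figures. The per-chip power draw (`ASSUMED_WATTS_PER_CHIP`) is a hypothetical placeholder, since no die-level specifications have been published.

```python
import math


def split_output_gw(total_gw, space_fraction=0.8):
    """Return (space_gw, ground_gw) for a given annual output."""
    if not 0.0 <= space_fraction <= 1.0:
        raise ValueError("space_fraction must be between 0 and 1")
    space_gw = total_gw * space_fraction
    return space_gw, total_gw - space_gw


def chips_for_power(power_gw, watts_per_chip):
    """Number of chips whose combined draw equals power_gw."""
    if watts_per_chip <= 0:
        raise ValueError("watts_per_chip must be positive")
    return math.ceil(power_gw * 1e9 / watts_per_chip)


ANNUAL_OUTPUT_GW = 1000.0        # announced target: more than 1 TW per year
ASSUMED_WATTS_PER_CHIP = 1000.0  # hypothetical, not from the announcement

space_gw, ground_gw = split_output_gw(ANNUAL_OUTPUT_GW)
space_chips = chips_for_power(space_gw, ASSUMED_WATTS_PER_CHIP)
ground_chips = chips_for_power(ground_gw, ASSUMED_WATTS_PER_CHIP)

print(f"Space:  {space_gw:.0f} GW, about {space_chips:,} chips per year")
print(f"Ground: {ground_gw:.0f} GW, about {ground_chips:,} chips per year")
```

The chip counts scale inversely with the assumed per-chip power, so they should be read as an order-of-magnitude illustration only.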

Strategic Implications for Tesla, SpaceX, and Humanity’s Future

TERAFAB positions Tesla and SpaceX at the forefront of the AI-hardware arms race. Key implications include:

- Autonomy Acceleration: An abundant in-house supply of inference chips would ease the hardware bottleneck for unsupervised FSD and robotaxi fleets.

- Optimus Mass Production: Billions of humanoid robots become feasible once inference compute is no longer supply-constrained.

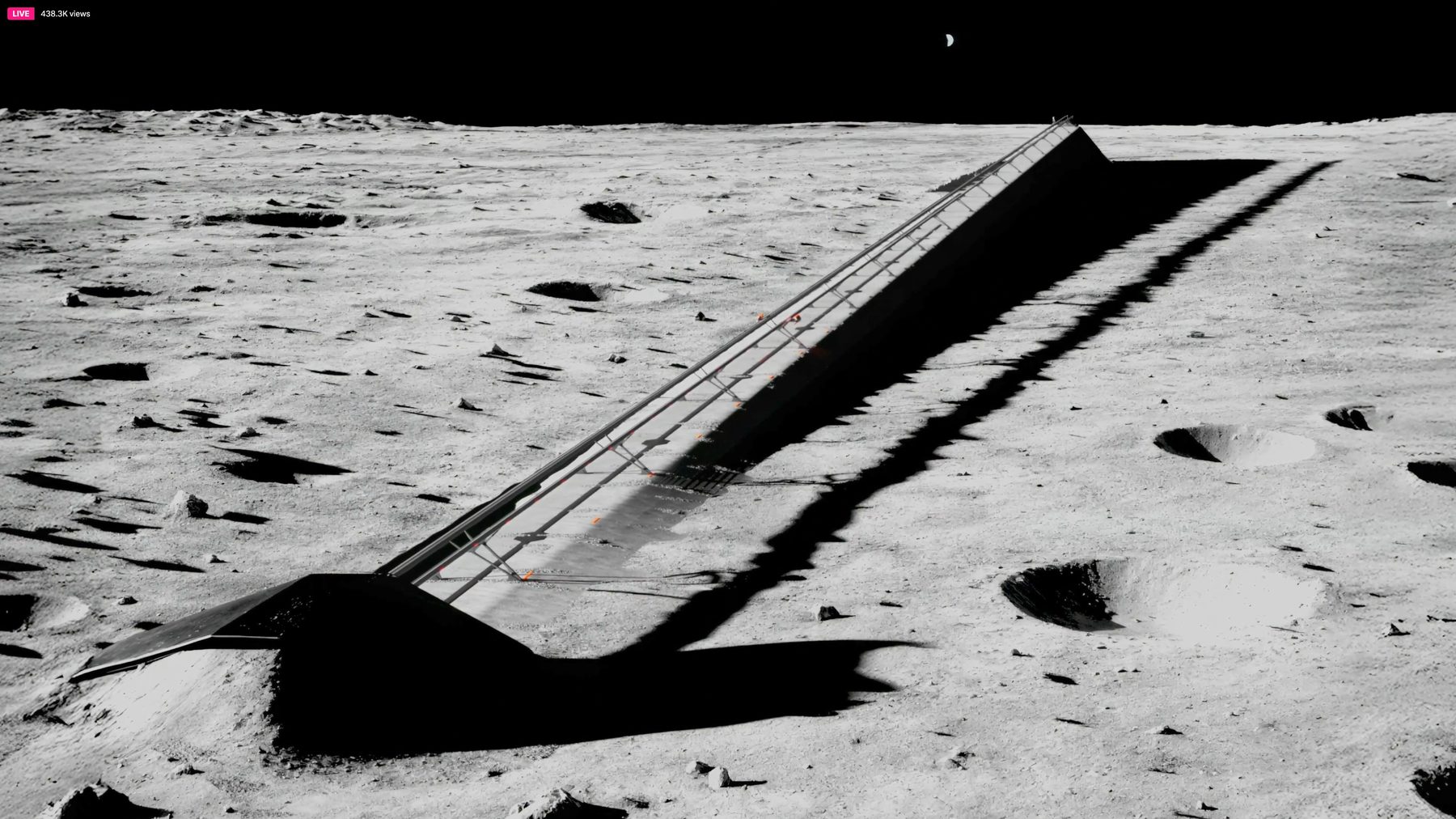

- Space-Based AI Infrastructure: Orbital data centers powered by abundant solar energy could host the largest training clusters ever conceived, free from terrestrial power and cooling limits.

- Competitive Moat: Full-stack co-design could create a durable advantage over companies reliant on third-party foundries such as TSMC.

- Long-Term Exploration: By enabling massive space-based compute, TERAFAB directly supports the “understand the universe” mission through AI-driven scientific discovery across the solar system and beyond.

Challenges, Timeline, and Execution Risks

While visionary, TERAFAB faces significant engineering and execution hurdles:

- Semiconductor fabrication at this scale requires unprecedented capital expenditure and process yield ramp.

- Space-qualified chips must survive launch, vacuum, radiation, and thermal extremes for decades.

- Starship cadence must scale dramatically to deploy orbital data centers.

- Timeline estimates suggest multi-year development before full terawatt output, mirroring early Gigafactory challenges.

- Competition from global foundries continues to advance rapidly in parallel.
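The Starship cadence risk in the list above can be framed as a rough mass budget. The sketch below estimates launch counts for one year of space-allocated output. Both the system-level specific power (`ASSUMED_W_PER_KG`) and the payload per launch (`ASSUMED_PAYLOAD_TONNES`) are hypothetical values chosen for illustration; neither comes from the announcement.

```python
import math


def launches_needed(power_gw, specific_power_w_per_kg, payload_tonnes):
    """Launches required to lift hardware delivering power_gw of compute.

    specific_power_w_per_kg is watts of compute per kilogram of deployed
    system mass (chips, solar arrays, radiators, structure).
    """
    if specific_power_w_per_kg <= 0 or payload_tonnes <= 0:
        raise ValueError("specific power and payload must be positive")
    total_mass_kg = power_gw * 1e9 / specific_power_w_per_kg
    return math.ceil(total_mass_kg / (payload_tonnes * 1000.0))


SPACE_OUTPUT_GW = 800.0         # 80% of the 1,000 GW annual target
ASSUMED_W_PER_KG = 100.0        # hypothetical system-level specific power
ASSUMED_PAYLOAD_TONNES = 100.0  # hypothetical payload per launch

launches = launches_needed(
    SPACE_OUTPUT_GW, ASSUMED_W_PER_KG, ASSUMED_PAYLOAD_TONNES
)
print(f"Launches per year under these assumptions: {launches:,}")
```

The result is inversely proportional to both assumed parameters, so doubling either the specific power or the payload halves the launch count. The point of the sketch is the scaling relationship, not the specific number.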

Nevertheless, Tesla’s track record of vertical integration, from batteries to motors to Dojo supercomputers, suggests the company is better placed than most to attempt it.

Conclusion: The Dawn of Terawatt AI

TERAFAB is framed as more than a new factory: it is the physical manifestation of Tesla’s and SpaceX’s shared mission to expand human consciousness and capability across the cosmos. By mastering the co-design of AI software, hardware, and fabrication, the project aims to remove the remaining hardware bottlenecks to abundant intelligence. If the first chips roll off the line and the first orbital clusters come online as planned, they would mark the start of a new era: one where understanding the universe is limited less by compute scarcity than by imagination.